Problem in a Nutshell

Mental health, which encompasses our emotional, psychological, and social well-being, is a vital part of our lives and affects how we think, feel, and act. According to the National Institute of Mental Health, approximately 1 in 5 adults in the U.S. (46.6 million) experiences mental illness every year, and suicide is the 2nd leading cause of death for people between the ages of 10 and 34. Mood disorders, such as depression, are among the most common mental health conditions, affecting nearly 10% of adults each year, and can contribute to higher medical expenses, poorer performance at school and work, and an increased risk of suicide if left untreated.

The ability to recognize emotions and evaluate overall emotional health can greatly improve mental health awareness and self-assessment. We therefore propose an emotion recognition system that uses machine learning techniques to classify positive and negative emotions and provides result visualizations and recommendations through a web application.

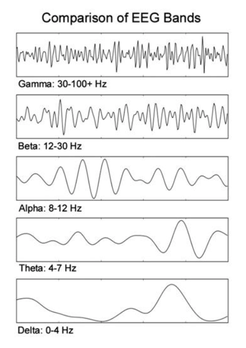

We classify emotion by collecting and analyzing electroencephalogram (EEG) brainwave data. EEG is a noninvasive monitoring method that records the electrical activity of the brain, and the recorded signal is typically divided into the following 5 frequency bands: delta, theta, alpha, beta, and gamma.
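To make the five bands concrete, here is a minimal sketch that maps a frequency to its band name. The band edges are commonly quoted approximations that vary between sources, and `EEG_BANDS` and `band_of` are hypothetical names chosen for illustration, not part of our system.

```python
# Approximate band edges in Hz. Conventions differ between sources,
# so these values are illustrative assumptions, not the project's settings.
EEG_BANDS = {
    "delta": (0.5, 4.0),
    "theta": (4.0, 8.0),
    "alpha": (8.0, 13.0),
    "beta": (13.0, 30.0),
    "gamma": (30.0, 100.0),
}


def band_of(frequency_hz):
    """Return the band whose half-open range [low, high) contains frequency_hz, or None."""
    for name, (low, high) in EEG_BANDS.items():
        if low <= frequency_hz < high:
            return name
    return None
```

For example, `band_of(10.0)` falls in the alpha range under these assumed edges, while a frequency outside every range returns `None`.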

After EEG data is collected, time-domain features are extracted from the raw data and used as input to the machine learning model, which classifies the data. To train the model, we collected EEG data associated with positive and negative emotions while watching YouTube videos with strong emotional impact. The trained model is then used in our web application to predict the user’s emotion in real time. The prediction results are stored in a remote database and presented to the user through visualizations.
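As a rough illustration of the feature extraction step, the sketch below splits a stream of raw samples into windows and computes a handful of time-domain statistics per window. The specific features, the window size, and the function names `time_domain_features` and `sliding_windows` are assumptions made for this example; they are not necessarily the ones our model uses.

```python
import statistics


def time_domain_features(window):
    """Compute a few common time-domain features for one window of samples."""
    mean = statistics.fmean(window)
    centered = [x - mean for x in window]
    # Count strict sign changes of the mean-centered signal,
    # a rough proxy for how quickly the signal oscillates.
    zero_crossings = sum(
        1 for a, b in zip(centered, centered[1:]) if a * b < 0
    )
    return {
        "mean": mean,
        "std": statistics.pstdev(window),  # population standard deviation
        "min": min(window),
        "max": max(window),
        "peak_to_peak": max(window) - min(window),
        "zero_crossings": zero_crossings,
    }


def sliding_windows(samples, size, step):
    """Yield consecutive windows of `size` samples, advancing by `step`."""
    for start in range(0, len(samples) - size + 1, step):
        yield samples[start:start + size]
```

Each window's feature dictionary would then become one input row for the classifier, with overlapping windows (a `step` smaller than `size`) giving more rows from the same recording.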

Prior Work

“Integration of a Low Cost EEG Headset with The Internet of Thing Framework” [4] uses the Mindwave Mobile as its EEG sensor and collects data from 6 different participants to train emotion classification models. To obtain training data, the author uses short video stimuli to induce emotion and a self-assessment manikin to evaluate each participant's emotional state. The author uses the dimensional model, which classifies emotion into four categories: high arousal/positive valence, high arousal/negative valence, low arousal/positive valence, and low arousal/negative valence. 24 features are extracted from the EEG data, and Linear Discriminant Analysis is selected as the classifier. The resulting accuracies range from 52.78% to 86.1%, with an average accuracy of 70.52%.
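The four categories of the dimensional model can be sketched as a simple mapping from a valence rating and an arousal rating to a quadrant label. This is only an illustration: the 1 to 9 rating scale, the midpoint of 5, the treatment of ratings exactly at the midpoint, and the function name `quadrant` are assumptions, not details taken from [4].

```python
def quadrant(valence, arousal, midpoint=5.0):
    """Map a (valence, arousal) rating pair to one of the four quadrant labels.

    Assumes ratings on a 1-9 scale; a rating exactly at the midpoint is
    treated as negative valence / low arousal, which is an arbitrary choice.
    """
    valence_label = "positive valence" if valence > midpoint else "negative valence"
    arousal_label = "high arousal" if arousal > midpoint else "low arousal"
    return f"{arousal_label}/{valence_label}"
```

Under these assumptions, a participant who rates a clip as pleasant and exciting (for example valence 7, arousal 8) would be labeled high arousal/positive valence.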